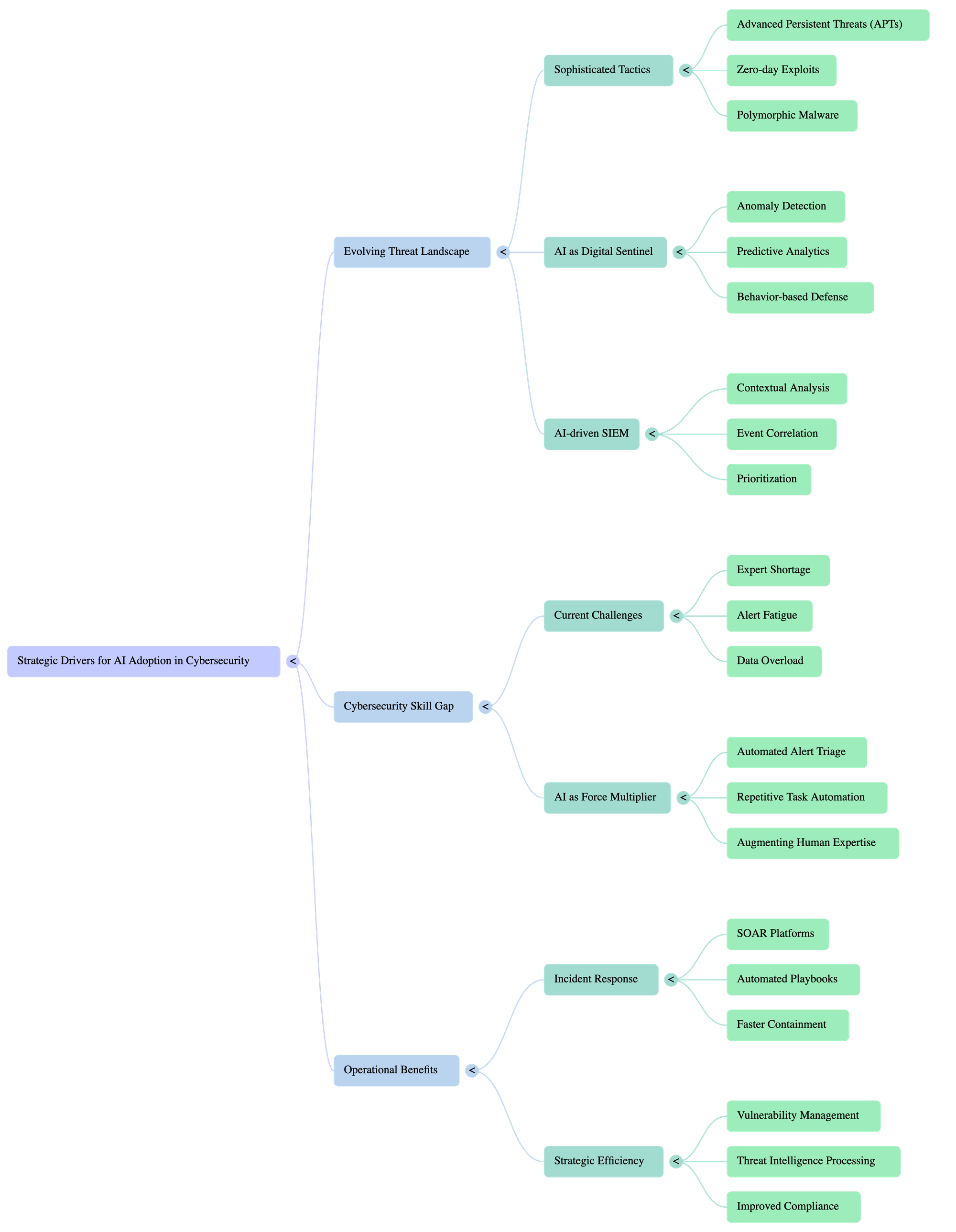

Strategic Drivers for AI Adoption in Cybersecurity

Learning Objectives

- Understand the core strategic drivers for AI adoption in cybersecurity

- Learn how to apply these drivers in practical security scenarios

- Explore advanced topics and best practices for AI-assisted defense

Introduction

In an increasingly digital and interconnected world, the landscape of cybersecurity threats is evolving at an unprecedented pace. Traditional, signature-based security measures often struggle to keep up with sophisticated, polymorphic attacks, and the sheer volume of security data can overwhelm human analysts. This is where Artificial Intelligence (AI) emerges not just as a technological advancement, but as a strategic imperative for modern cybersecurity.

Strategic drivers for AI adoption in cybersecurity are the fundamental business, operational, and threat-related factors compelling organizations to integrate AI into their security frameworks. These drivers aren't merely about adopting a new tool; they represent a strategic shift towards more proactive, intelligent, and efficient defense mechanisms. Understanding them helps organizations make informed decisions about where and how to invest in AI, so that technology choices align with overarching security goals and business objectives.

This module will delve into the critical factors propelling AI to the forefront of cybersecurity strategies. You will learn:

- What AI brings to the cybersecurity table: From automating mundane tasks to detecting never-before-seen threats.

- Why organizations are increasingly reliant on AI: Addressing challenges like skill shortages, data overload, and the escalating sophistication of attackers.

- How AI adoption translates into tangible benefits: Enhanced threat detection, faster incident response, improved compliance, and greater operational efficiency.

By the end of this module, you will have a comprehensive understanding of the strategic rationale behind AI adoption in cybersecurity, equipping you to identify opportunities and challenges in real-world scenarios.

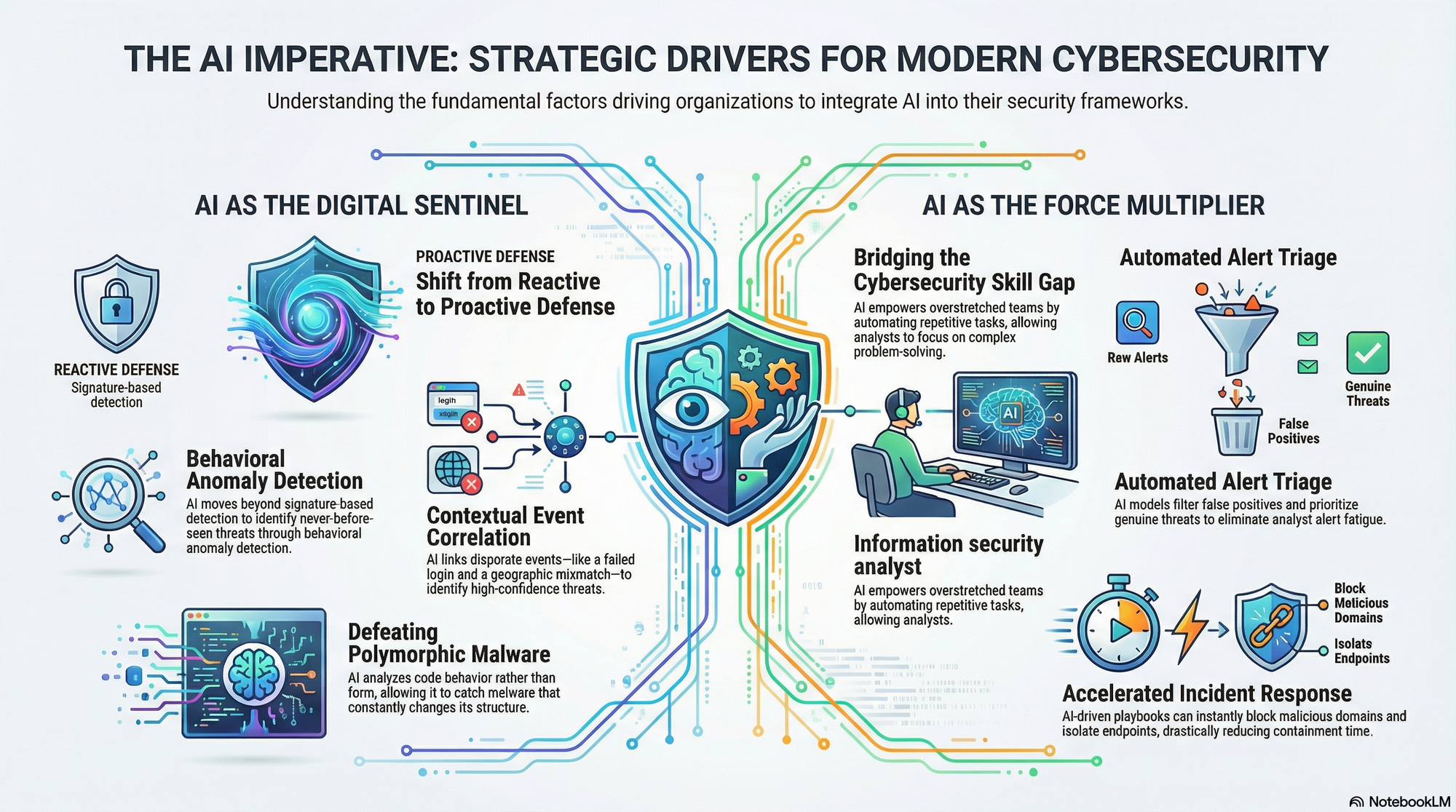

1. 🌐 The Evolving Threat Landscape: AI as Your Digital Sentinel

The digital battleground is constantly shifting. Cybercriminals are employing increasingly sophisticated tactics, including advanced persistent threats (APTs), zero-day exploits, and highly evasive polymorphic malware. Traditional security tools, often reliant on known signatures, struggle to detect these novel and adaptive attacks. This rapid evolution of threats is arguably the most significant strategic driver for AI adoption.

How AI Acts as a Digital Sentinel:

AI excels at identifying anomalies and patterns in datasets too large for human analysts to review manually. Machine learning algorithms can learn what "normal" network behavior looks like and flag deviations, including those caused by threats that have never been seen before. This shifts security from a reactive, signature-based model to a proactive, behavior-based defense.

- Anomaly Detection: AI models can establish baselines for user behavior, network traffic, and system processes. Any significant deviation triggers an alert.

- Predictive Analytics: By analyzing historical attack data and threat intelligence, AI can estimate which attack vectors and vulnerabilities are most likely to be exploited, so defenses can be prioritized before an attack occurs.

- Polymorphic Malware Detection: Because AI analyzes code behavior and structure rather than just signatures, it is more effective than signature matching against malware that constantly changes its form.
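The baseline idea behind anomaly detection can be illustrated with a deliberately simple statistical sketch. It is not how any particular product works: it assumes a single numeric feature (hypothetical daily login counts for one user) and uses a z-score with an illustrative threshold of 3 standard deviations, whereas production systems typically model many features at once. The function names and sample data are made up for illustration.

```python
from statistics import mean, stdev


def anomaly_score(baseline: list[float], observed: float) -> float:
    """Return how many standard deviations `observed` lies from the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any change at all is maximally unusual.
        return 0.0 if observed == mu else float("inf")
    return abs(observed - mu) / sigma


def is_anomalous(baseline: list[float], observed: float, threshold: float = 3.0) -> bool:
    """Flag the observation when it deviates from the baseline by more than `threshold` sigmas."""
    return anomaly_score(baseline, observed) > threshold


# Hypothetical daily login counts for one user over two weeks.
daily_logins = [12, 9, 11, 10, 13, 12, 8, 11, 10, 12, 9, 11, 13, 10]

print(is_anomalous(daily_logins, 11))  # a typical day -> False
print(is_anomalous(daily_logins, 60))  # a sudden spike -> True
```

Note that the detector needs no signature for the spike: it only needs to know what "normal" looked like for this user.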

Practical Example: AI-driven SIEM Correlation

Consider a Security Information and Event Management (SIEM) system enhanced with AI.

A traditional SIEM might alert on a single failed login attempt. An AI-driven SIEM, however, can correlate that single failed login with:

- An unusual access time for that user.

- A login attempt from a geographically improbable location.

- Concurrent access attempts to multiple sensitive resources.

- A recent phishing email targeting that user.

Individually, these might be low-priority events. Correlated by AI, they form a high-confidence indicator of a sophisticated attack, such as an account takeover using stolen credentials or an insider threat.

    # Conceptual pseudo-code for AI-driven SIEM correlation logic.
    # load_ai_model, get_threat_intelligence, and get_related_events are
    # placeholders for whatever your SIEM platform provides.

    THRESHOLD_ANOMALY = 0.8  # illustrative cut-off; tune per environment

    def ai_security_event_analyzer(event_stream):
        user_behavior_model = load_ai_model("user_behavior_profile.pkl")
        threat_intel_feed = get_threat_intelligence()

        for event in event_stream:
            # Step 1: Basic rule-based filtering (e.g., failed logins)
            if event.type == "login_failure" and event.severity > 3:
                print(f"Rule-based alert: {event.description}")

                # Step 2: AI-driven contextual analysis of the flagged login
                user_profile = user_behavior_model.predict(event.user_id, event.timestamp, event.location)
                if user_profile["anomaly_score"] > THRESHOLD_ANOMALY:
                    print(f"AI Anomaly Alert: Unusual activity for user {event.user_id} (Score: {user_profile['anomaly_score']})")

                    # Step 3: Correlate with other events and threat intel
                    related_events = get_related_events(event.user_id, event.timestamp, time_window=600)  # Past 10 mins
                    if any(e.type == "multiple_resource_access" for e in related_events):
                        print("High-Confidence Alert: Multiple resource access detected after anomaly.")
                    if event.ip_address in threat_intel_feed["blacklisted_ips"]:
                        print("Critical Alert: Login attempt from known malicious IP.")

                # Step 4: Prioritize and recommend action
                # ... (e.g., initiate automated response, notify security team)
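The correlation idea above can also be shown as a small, self-contained sketch that runs as written. It assumes each weak signal has already been detected upstream and simply adds up points for the distinct signal kinds seen for one user inside a time window. The `Signal` type, the point values, and the priority cut-offs are all hypothetical choices for illustration; a real system would learn or tune them, and slower-moving context such as a recent phishing email would likely need a longer lookback than the 10 minutes used here.

```python
from dataclasses import dataclass

# Illustrative points per signal kind (out of 100); not taken from any product.
SIGNAL_POINTS = {
    "failed_login": 10,
    "unusual_access_time": 20,
    "improbable_location": 30,
    "multiple_sensitive_resources": 25,
    "recent_phishing_target": 15,
}


@dataclass(frozen=True)
class Signal:
    user_id: str
    kind: str
    timestamp: int  # seconds since epoch


def correlate(signals, user_id, now, window_seconds=600):
    """Sum the points of distinct signal kinds seen for one user within the window."""
    kinds = {
        s.kind
        for s in signals
        if s.user_id == user_id and 0 <= now - s.timestamp <= window_seconds
    }
    return sum(SIGNAL_POINTS.get(kind, 0) for kind in kinds)


def priority(score):
    """Map a correlation score to a coarse priority label."""
    if score >= 70:
        return "high"
    if score >= 30:
        return "medium"
    return "low"


signals = [
    Signal("alice", "failed_login", 1000),
    Signal("alice", "unusual_access_time", 1000),
    Signal("alice", "improbable_location", 1005),
    Signal("alice", "multiple_sensitive_resources", 1200),
    Signal("bob", "failed_login", 1100),
]

print(priority(correlate(signals, "alice", now=1300)))  # four signals combine -> high
print(priority(correlate(signals, "bob", now=1300)))    # one isolated signal -> low
```

The same failed login that is low priority for one user becomes high priority for another once the surrounding context is taken into account.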


2. 🧑‍💻 Bridging the Cybersecurity Skill Gap: AI as Your Force Multiplier

The global demand for skilled cybersecurity professionals far outstrips the supply. Organizations face a persistent shortage of experts in areas like threat hunting, incident response, and security architecture. This skill gap leaves many organizations vulnerable, as their human teams are stretched thin and often overwhelmed by the sheer volume of alerts and tasks. AI offers a powerful solution by acting as a force multiplier, enhancing the capabilities of existing teams.

How AI Augments Human Expertise:

AI doesn't replace human analysts; it empowers them. By automating repetitive, low-value tasks and providing intelligent insights, AI allows security professionals to focus on strategic thinking, complex problem-solving, and critical decision-making.

- Automated Alert Triage: AI can suppress likely false positives and prioritize genuine threats, reducing alert fatigue for analysts.

- Incident Response Automation: AI-powered Security Orchestration, Automation, and Response (SOAR) platforms can execute predefined playbooks for common incidents, speeding up response times.

- Threat Intelligence Processing: AI can rapidly ingest, analyze, and contextualize vast amounts of threat intelligence from various sources, presenting actionable insights to analysts.

- Vulnerability Management: AI can help prioritize vulnerabilities based on actual risk and potential impact, guiding remediation efforts.
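As a rough sketch of automated alert triage, the snippet below suppresses alerts that a model considers likely false positives and ranks the rest by confidence multiplied by asset criticality. The `Alert` fields, the 0.2 suppression threshold, and the ranking formula are assumptions made for illustration, not a standard scoring scheme.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    alert_id: str
    model_confidence: float  # 0.0-1.0, output of a hypothetical classifier
    asset_criticality: int   # 1 (low) to 5 (business critical)


def triage(alerts, suppress_below=0.2):
    """Drop likely false positives, then rank the rest by confidence x criticality."""
    kept = [a for a in alerts if a.model_confidence >= suppress_below]
    return sorted(
        kept,
        key=lambda a: a.model_confidence * a.asset_criticality,
        reverse=True,
    )


alerts = [
    Alert("A-1", 0.95, 2),  # confident, but on a low-value asset
    Alert("A-2", 0.10, 5),  # likely false positive, suppressed
    Alert("A-3", 0.60, 5),  # moderate confidence on a critical asset
    Alert("A-4", 0.30, 1),
]

for alert in triage(alerts):
    print(alert.alert_id)  # prints A-3, A-1, A-4 in that order
```

In practice, suppressed alerts would usually be logged for later review rather than discarded, so that analysts can audit what the model filtered out.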

Real-World Application: AI for Incident Response Playbooks

Imagine a phishing attack. A human analyst would typically perform several steps:

- Identify the phishing email.

- Analyze its headers and links.

- Check if other users received similar emails.

- Block the sender and malicious URLs.

- Isolate affected workstations (if any).

- Communicate with affected users.

With AI and SOAR, many of these steps can be automated. An AI system could:

- Automatically detect a suspicious email (e.g., using natural language processing for content analysis, reputation checks for sender).

- Trigger a playbook to automatically scan mailboxes for similar emails.

- Block the sender's domain at the email gateway and the malicious URLs at the web proxy or firewall.

- Create a ticket for the security team with all relevant forensic data already gathered.

- Even initiate an automated scan of potentially affected endpoints.

This significantly reduces the time from detection to containment, freeing up the human analyst to focus on the more complex aspects of the incident, like root cause analysis or advanced threat hunting.

    # Conceptual incident response playbook snippet using AI triggers.
    # The helper functions (analyze_email_content, block_sender, and so on)
    # are placeholders for SOAR platform integrations.

    def ai_phishing_response_playbook(email_id, detected_threat_level):
        if detected_threat_level == "HIGH_CONFIDENCE_PHISHING":
            print(f"AI Triggered: High confidence phishing detected for email ID {email_id}.")

            # Step 1: Automated email analysis and sender blocking
            email_analysis_result = analyze_email_content(email_id)
            block_sender(email_analysis_result["sender_domain"])
            block_url_reputation(email_analysis_result["malicious_urls"])
            print(f" - Sender '{email_analysis_result['sender_domain']}' and malicious URLs blocked.")

            # Step 2: Scan for similar emails across organization
            scan_results = scan_all_mailboxes_for_similar(email_analysis_result["fingerprint"])
            print(f" - Found similar emails in {len(scan_results['affected_users'])} mailboxes.")

            # Step 3: Isolate affected endpoints (if any user clicked)
            if scan_results["clicked_users"]:
                for user in scan_results["clicked_users"]:
                    isolate_endpoint(user.device_id)
                    print(f" - Endpoint '{user.device_id}' isolated due to potential compromise.")